The errancy of medical pathologists

In The Wisdom of Crowds, James Surowiecki slates medical pathologists, among other "experts", on the grounds that their judgements lack consistency. In the Abacus 2005 edition, the passage is on page 33. He states "More disconcertingly, one study found that the internal consistency of medical pathologists' judgements was just 0.5, meaning that a pathologist presented with the same evidence would, half the time, offer a different opinion." Based on Surowiecki's statement, one might think that the pathologist was making a binary decision, benign or malignant, and would guess benign half the time and malignant half the time; that is, one might as well toss a coin. In fact, this figure of 0.5 is a correlation coefficient, where 0 indicates no consistency beyond chance and 1.0 indicates perfect consistency. If the pathologist behaved randomly, giving opinion A half the time and opinion B half the time, the correlation coefficient would be 0.0, not 0.5. Surowiecki has taken this information from a paper by Shanteau [1] and, to be fair to Surowiecki, he is echoing the opinion of Shanteau that "This [the correlation coefficient of 0.5] suggests a low level of competence."
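The gap between "a different opinion half the time" and a correlation coefficient of 0.5 can be illustrated with a small simulation. This is only a sketch under simplifying assumptions that are mine and not Einhorn's: the opinion is binary, the two opinions are equally common, and the pathologist repeats the first opinion with a fixed probability. The function names `pearson` and `retest_correlation` are made up for the illustration.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def retest_correlation(agreement, n_cases=100_000, seed=0):
    """Correlation between a first and second reading of the same cases.

    Assumption (hypothetical model): each first opinion is 0 or 1 with equal
    probability, and the second reading repeats it with probability
    `agreement`, otherwise it flips.
    """
    rng = random.Random(seed)
    first = [rng.randint(0, 1) for _ in range(n_cases)]
    second = [a if rng.random() < agreement else 1 - a for a in first]
    return pearson(first, second)


for agreement in (0.5, 0.75, 1.0):
    r = retest_correlation(agreement)
    print(f"same opinion {agreement:.0%} of the time: r = {r:.2f}")
```

Under these assumptions the correlation works out as twice the agreement rate minus one, so agreeing with oneself half the time gives a correlation near 0, while a correlation of 0.5 corresponds to giving the same opinion about three times in four.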

Shanteau in turn was citing a paper by Einhorn [2]. Einhorn's paper, now 33 years old, reported a study of just three histopathologists, of whom one was a resident (trainee). The pathologists were presented with 193 slides from patients with Hodgkin's disease. The purpose of Einhorn's study was not to assess the accuracy of pathologists' diagnoses but to explore the way in which pathologists quantify various diagnostic features. Einhorn concludes not that these experts are incompetent but that it is difficult to understand the processes underlying their expertise; he finishes by saying "We can only say that in a highly probabilistic world, there may be many routes to the same goal and that there may be more than one way to perform the cognitive tasks involved in judgment." Shanteau and Surowiecki have made perverse use of Einhorn's paper. Let me give you an analogy. People are endowed with a remarkable ability to recognise each other. You would recognise your Aunt Minnie at 100 metres in a crowd. But can you describe her with sufficient accuracy to enable a stranger to recognise her with the same facility? If you cannot, this in no way detracts from your ability to recognise her; it only emphasises the mysteriousness of this ability.

There is sound published evidence that histopathologists have an error rate of about 2% [3]. That is, in 2% of cases they make a clinically significant error. In 98% of cases, they get the diagnosis essentially right.

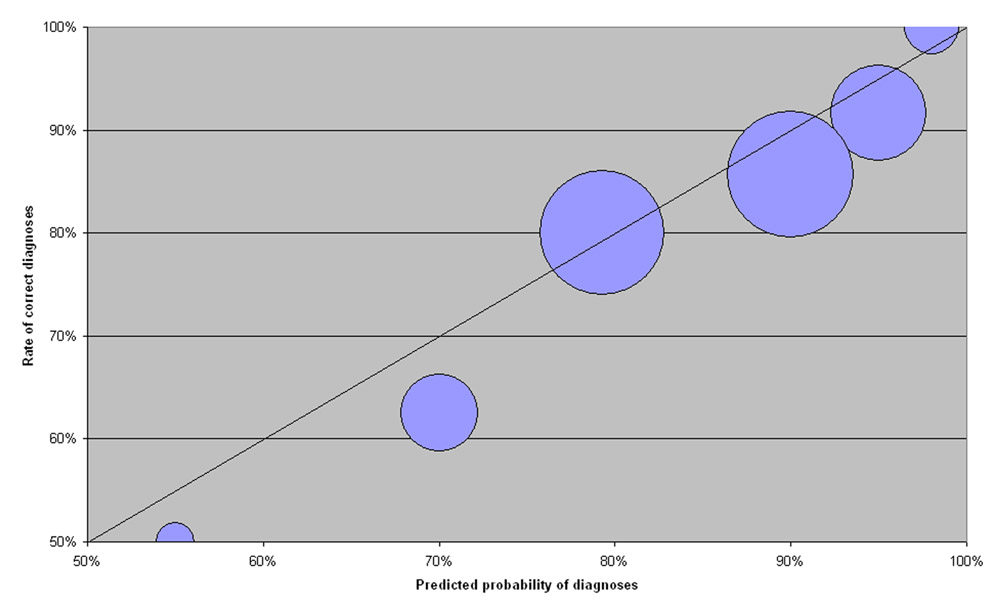

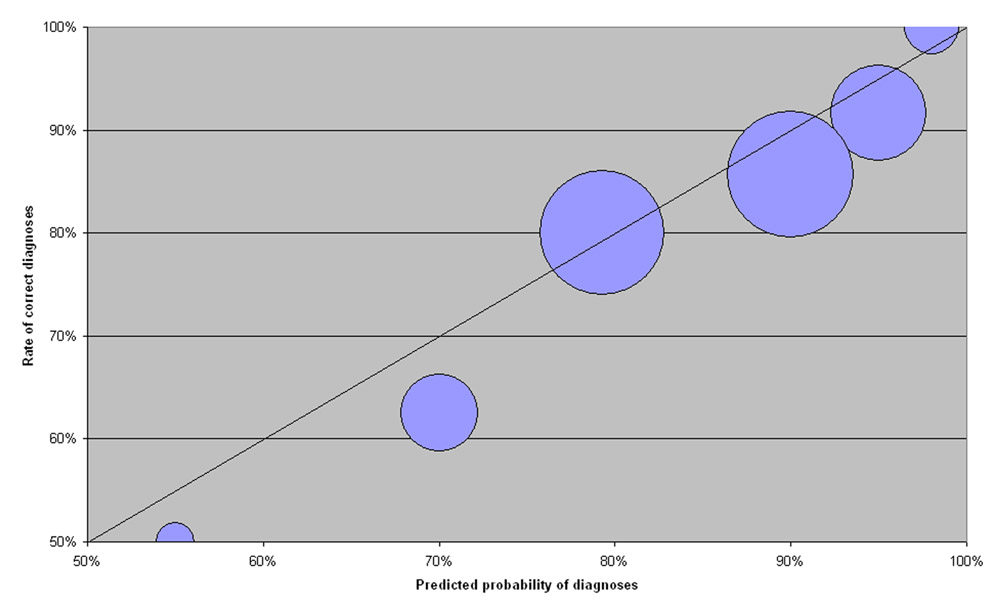

Surowiecki goes on to say that experts, including physicians, are over-confident of their own judgements. It just happens that I have been collecting data on my own level of confidence. When I have had a difficult diagnostic problem, I have recorded my opinion and my degree of confidence in it. In many of these cases, the problem was difficult enough for me to send the case for a further opinion. In other cases, the definitive diagnosis was based on further histological stains or on clinical outcome. Subsequently I have decided, based on the best available evidence, whether I was right or wrong. If my level of confidence is sound, then for the aggregate of cases where my confidence was 70%, I should be right seven times in ten, not more and not less. In the accompanying graph, I have plotted my rate of correct diagnosis against my confidence. A perfectly calibrated performance would fall along the diagonal line. The size of each circle is proportional to the number of cases at that point, ranging from 2 cases for the smallest circle to 21 cases for the largest. I think you will agree that these points fall fairly close to the diagonal line. My over-confidence is marginal; when I say I am 90% confident of my judgement, I should have said that I was 85% confident. This is hardly gross over-confidence. I hope that this demonstrates that an expert can have a very accurate insight into the reliability of his own judgements.
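The comparison of stated confidence with the observed rate of correct diagnosis can be sketched in a few lines. The records below are invented purely to show the calculation and are not my actual case data; the function name `calibration_table` is likewise only illustrative.

```python
from collections import defaultdict


def calibration_table(records):
    """Group (stated_confidence, was_correct) pairs by stated confidence.

    Returns {confidence: (number_of_cases, observed_rate_correct)}.
    """
    buckets = defaultdict(lambda: [0, 0])  # confidence -> [cases, correct]
    for confidence, was_correct in records:
        buckets[confidence][0] += 1
        buckets[confidence][1] += int(was_correct)
    return {c: (n, hits / n) for c, (n, hits) in sorted(buckets.items())}


# Hypothetical records, made up for illustration only.
records = (
    [(0.7, True)] * 7 + [(0.7, False)] * 3
    + [(0.9, True)] * 17 + [(0.9, False)] * 3
)

table = calibration_table(records)
for confidence, (n, observed) in table.items():
    gap = confidence - observed  # positive gap means over-confidence
    print(f"stated {confidence:.0%}, cases {n}, correct {observed:.0%}, gap {gap:+.0%}")
```

Each row of the table corresponds to one circle on a calibration plot: the stated confidence is the horizontal position, the observed rate is the vertical position, and the number of cases sets the size. A point below the diagonal indicates over-confidence at that level.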

Galton and the ox

Surowiecki starts his book with a case study by Francis Galton, in which a crowd at a fair guesses the weight of a dressed ox. I was puzzled by the apparent discrepancy between Galton's original paper and Surowiecki's account. Since this study is widely cited in reviews of The Wisdom of Crowds, I think it worth going into some detail, although I have not seen any reviews that show awareness of the discrepancy. In the paper as published in Nature, Galton states "the middlemost estimate expresses the vox populi," and "the middlemost estimate is 1207 lb., and the weight of the dressed ox proved to be 1198 lb.; so the vox populi was in this case 9 lb., or 0.8 percent. too high". Note that Galton uses the median (the middlemost) weight, not the arithmetic mean.

Surowiecki in The Wisdom of Crowds states that "among other things, he [Galton] added all the contestants' estimates, and calculated the mean of the group's guesses." Surowiecki continues "The crowd had guessed that the ox, after it had been slaughtered and dressed, would weigh 1,197 pounds." [In a radio interview, he gives the figures as 1187 and 1188.] Galton only gave the mean in subsequent correspondence in Nature, in response to a letter from a Mr Hooker, who had estimated the mean from the published percentiles. However, Galton clearly articulates his reasons for preferring the median to the mean. Surowiecki has ignored Galton's argument, choosing the mean instead, presumably because he thinks that it better supports his case for the wisdom of crowds. [In support of Surowiecki, Karl Pearson does not consider the middlemost man to be the best median; the average of almost any pair of symmetrical percentiles gives a result with less probable error.] I am not sure that all of this matters too much; the crowd did impressively well by any measure.
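The size of the error under each measure is easy to check. The sketch below uses only the three figures quoted above (1207 lb, 1197 lb and the dressed weight of 1198 lb); the short list of guesses at the end is hypothetical, invented to show the general property that a median is less sensitive than a mean to a single extreme guess, and is not taken from Galton's data.

```python
from statistics import mean, median


def percent_error(estimate, actual):
    """Signed error of an estimate as a percentage of the actual value."""
    return 100 * (estimate - actual) / actual


DRESSED_WEIGHT = 1198   # lb, as reported by Galton
GALTON_MEDIAN = 1207    # lb, the "middlemost estimate"
SUROWIECKI_MEAN = 1197  # lb, the figure quoted by Surowiecki

print(f"median: {percent_error(GALTON_MEDIAN, DRESSED_WEIGHT):+.2f}%")
print(f"mean:   {percent_error(SUROWIECKI_MEAN, DRESSED_WEIGHT):+.2f}%")

# Hypothetical guesses (not Galton's data), with one wild overestimate.
hypothetical_guesses = [1150, 1180, 1200, 1210, 1230, 3000]
print("median of hypothetical guesses:", median(hypothetical_guesses))
print("mean of hypothetical guesses:  ", mean(hypothetical_guesses))
```

The median comes out about 0.75% too high, which rounds to the 0.8 percent that Galton reported, and the mean is less than 0.1% too low. Either way the error is under 1%.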

A second issue that emerges from the subsequent correspondence is the question of whether the ballot was testing the vox populi or the vox expertorum. The question was not resolved in 1907 and certainly cannot be resolved now.

I would like to acknowledge that I found the link to the facsimile of the Nature paper through Ryan Tomayko's site.

References:

[1] James Shanteau. Domain differences in expertise. This paper is unpublished but may be found at http://www.k-state.edu/psych/cws/pdf/domain_diffs02.PDF

[2] Hillel J. Einhorn. Expert judgement: some necessary conditions and an example. Journal of Applied Psychology 1974, Vol. 59, pages 562-571.

Paul Bishop, Consultant Histopathologist, 100046.1102@compuserve.com